Yale scientists reveal shared logic behind natural and artificial networks

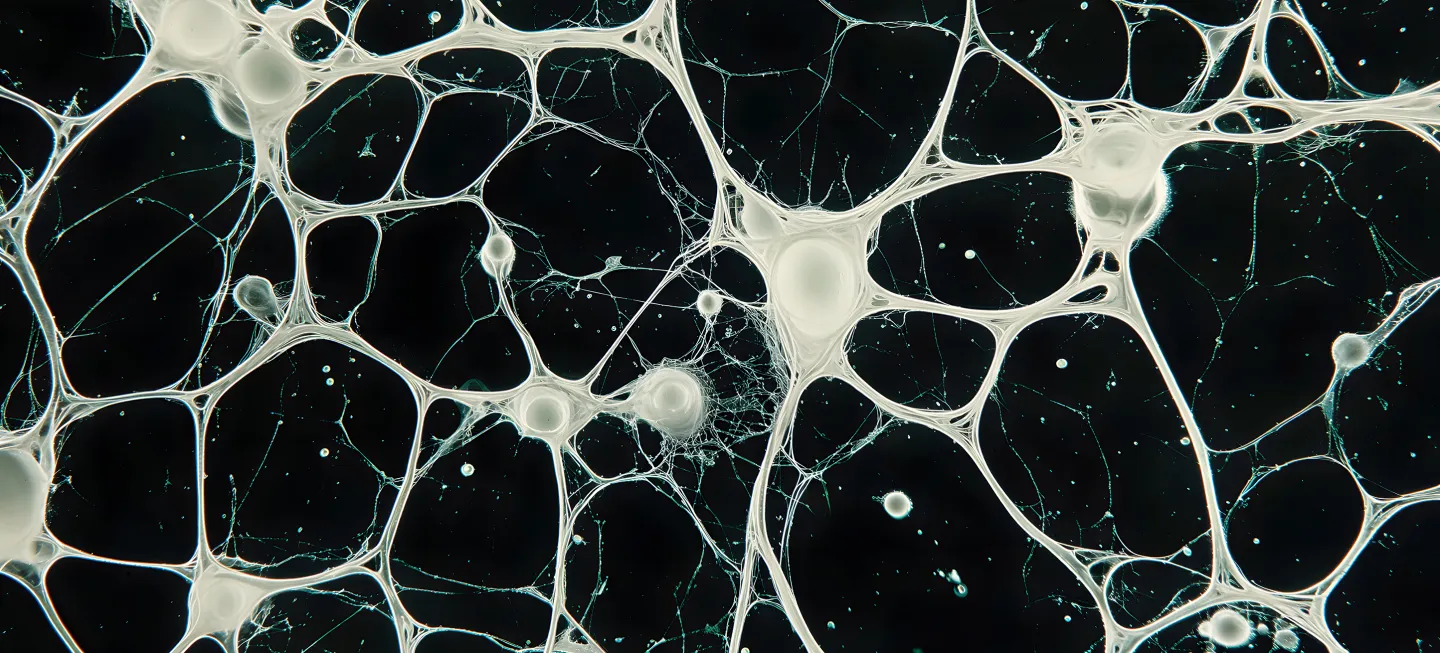

From the brain circuits and biochemical networks in our cells to AI platforms, both natural and engineered information-processing networks share a remarkably similar architecture that uses different timescales to figure things out, Yale researchers report in a new study in Cell Systems. It’s an insight that could help us better understand both biology and our current efforts in AI.

Synaptic circuits, cellular signaling pathways, and artificial neural networks may seem worlds apart, but surprisingly, they share a common strategy for organizing and processing information. All of these complex systems, the study finds, appear to have converged on the same solution: a hierarchical arrangement of timescales. In this structure, signals are processed quickly at the point of entry, but this processing slows as they travel through deeper layers of the network.

“We were surprised by that at first, but then we realized that these networks, even though some of them are biological and natural and some of them are engineered, they're evolving or training to do the same thing,” said Andre Levchenko, the John C. Malone Professor of Biomedical Engineering and the Director of the Yale Systems Biology Institute on the West Campus.

These systems, Levchenko says, all feature components that process information at different rates and to varying depths. For instance, our brains are very good at responding quickly to certain types of information. When someone throws you a ball, you move swiftly to catch it.

“It's a very quick response, quick analysis,” he said. “It doesn't have any memory, though. It cannot process information on its own over long periods of time. It's a very quick here-and-now type of thing that may otherwise suffer from the delay from too much processing.”

That’s the first layer of this hierarchical structure. Deeper layers handle the information in a more detailed, deliberate fashion.

“As the information travels physically, anatomically through the brain, it gets processed again and again,” said Levchenko. “But now those parts of the brain have longer memories. They can integrate information more and more. There's a very extensive range of observations telling us that when the information flows through the brain, it gets processed on different timescales. So there is this hierarchy.”
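The hierarchy described here can be illustrated with a toy model. The sketch below is not taken from the study: it assumes each layer behaves like a simple leaky integrator, and the time constants are hypothetical values chosen only to make the input layer fast and the deeper layers progressively slower. The function names `run_cascade` and `half_rise_step` are likewise invented for this illustration.

```python
def run_cascade(signal, time_constants, dt=1.0):
    """Pass a signal through a chain of leaky integrators.

    Each layer follows x <- x + (dt / tau) * (input - x), where a layer's
    input is the freshly updated output of the layer before it.
    """
    states = [0.0] * len(time_constants)
    history = []
    for value in signal:
        layer_input = value
        for i, tau in enumerate(time_constants):
            states[i] += (dt / tau) * (layer_input - states[i])
            layer_input = states[i]
        history.append(list(states))
    return history


def half_rise_step(history, layer):
    """Return the first time step at which a layer's state reaches 0.5."""
    for step, states in enumerate(history):
        if states[layer] >= 0.5:
            return step
    return None


# Hypothetical time constants (not from the study): fast near the input,
# slower with depth.
TIME_CONSTANTS = [2.0, 10.0, 50.0]

# A constant input that switches on at step 0 and stays on.
step_input = [1.0] * 400

history = run_cascade(step_input, TIME_CONSTANTS)
rise_steps = [half_rise_step(history, i) for i in range(len(TIME_CONSTANTS))]
print(rise_steps)
```

In this toy setup, the first layer tracks a sudden change in the input almost immediately, while each deeper layer takes longer to respond because it averages over a longer stretch of the past. That is one simple way to picture quick "here-and-now" responses at the entry point and longer memories further in.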

Single cells have their own complex systems, known as signal transduction networks, for sensing external stimuli. These networks, Levchenko says, operate in a similar fashion.

“Over the last decade or so, through our own experiments and the experiments of other labs, we are looking at these single cells,” he said. “What you find is that, like in the brain, there are many steps. And to our huge surprise, what we see in the many studies that we've done is that there's also this hierarchical system, more or less what we see in the brain also happens in the cells.”

The same is true for artificial neural networks, a critical component of many forms of AI.

“These large language models (LLM) are giving you both the context of the incoming information and also the immediate inputs with individual letters, words or sentences,” he said. Close to the input, the LLMs reacted quickly. Deeper in the network, they worked more slowly but could integrate massive amounts of information. “So we actually realized that again, many of these networks have multiple layers with different, hierarchical dynamics.”

As disparate as these networks are, Levchenko notes, it’s striking how similarly they parse the flow of information.

“It’s the same goal for all these different networks, and apparently they all evolve the same solution of how to do it,” he said. “It’s potentially very helpful because, by understanding one of them, we can understand the other a little bit better and vice versa.”

Published: Mar 2, 2026